Silent Observer

Yann LeCun has announced that he is leaving Meta. The news isn’t particularly surprising: the press has noted that he has been sidelined inside the company for years, and LeCun has been steadily building his own public momentum and personal brand.

Recently, a friend DMed me a photo of LeCun after his sold-out, wall-to-wall talk at Pioneer Works. LeCun’s blunt message: LLMs are not the future.

I rolled my eyes and replied, “What are you talking about?” A few days later, though, I ran into another friend who was talking about the same thing, so I looked it up.

LeCun has declared that “world models”, not language models, will define the next era of AI. He is widely rumored to be starting a new company built entirely around that vision.

As I understand it, world models are observant models. Where language models learn patterns in text, these systems learn from visual and other sensory input, and they do so at an abstract level. “They don’t process data pixel-by-pixel the way generative models do,” according to LeCun. Instead, they learn broad, compressed representations: higher-level concepts rather than raw sensory noise.

A key component of this is LeCun’s Joint-Embedding Predictive Architecture (JEPA), which learns by predicting information in a compressed embedding space rather than reconstructing images pixel by pixel. In his co-authored paper “Self-Supervised Learning from Images with a Joint-Embedding Predictive Architecture” (https://arxiv.org/abs/2301.08243), he and his colleagues describe a model that learns directly from unlabeled images and must therefore build its own semantic understanding of what it sees.
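To make the embedding-space idea concrete, here is a toy sketch in Python with NumPy. It is not LeCun’s or the paper’s implementation: the linear “encoders”, the patch and embedding sizes, and every function name (`encode`, `jepa_loss`, `pixel_loss`, `ema_update`) are hypothetical stand-ins. There is no training loop, and a single visible patch and a single hidden patch replace the paper’s multi-block masking. What it shows is where each loss is computed: the JEPA-style loss compares a predicted embedding with the target encoder’s embedding of the hidden patch, while the generative-style loss has to reconstruct every raw pixel.

```python
import numpy as np

rng = np.random.default_rng(0)

PATCH_DIM = 16  # hypothetical: a flattened 4x4 grayscale patch
EMBED_DIM = 4   # hypothetical: size of the compressed embedding

# Toy linear weights stand in for the Vision Transformers used in I-JEPA.
context_encoder = rng.normal(scale=0.1, size=(PATCH_DIM, EMBED_DIM))
target_encoder = context_encoder.copy()  # follows the context encoder via EMA, not gradients
predictor = rng.normal(scale=0.1, size=(EMBED_DIM, EMBED_DIM))
pixel_decoder = rng.normal(scale=0.1, size=(EMBED_DIM, PATCH_DIM))  # only for the contrast


def encode(weights: np.ndarray, patch: np.ndarray) -> np.ndarray:
    """Compress a raw patch into a small embedding vector."""
    return np.tanh(patch @ weights)


def jepa_loss(visible: np.ndarray, hidden: np.ndarray) -> float:
    """Embedding-space objective: predict the hidden patch's embedding."""
    predicted = encode(context_encoder, visible) @ predictor
    # In the real method no gradient flows through this target branch.
    target = encode(target_encoder, hidden)
    return float(np.mean((predicted - target) ** 2))


def pixel_loss(visible: np.ndarray, hidden: np.ndarray) -> float:
    """Generative-style objective: reconstruct every pixel of the hidden patch."""
    reconstructed = encode(context_encoder, visible) @ pixel_decoder
    return float(np.mean((reconstructed - hidden) ** 2))


def ema_update(target: np.ndarray, online: np.ndarray, momentum: float = 0.99) -> np.ndarray:
    """Nudge the target encoder's weights a small step toward the context encoder's."""
    return momentum * target + (1.0 - momentum) * online


# Random data stands in for one visible and one masked region of an image.
visible_patch, hidden_patch = rng.normal(size=(2, PATCH_DIM))
print("embedding-space loss:", jepa_loss(visible_patch, hidden_patch))
print("pixel-space loss:", pixel_loss(visible_patch, hidden_patch))
```

In this toy, the JEPA-style loss is measured over `EMBED_DIM` (4) numbers and the pixel loss over `PATCH_DIM` (16). The design point, as the I-JEPA paper frames it, is that a model predicting in embedding space is free to ignore unpredictable pixel-level detail. `ema_update` marks where, in a real training loop, the target encoder would slowly follow the context encoder after each gradient step, a design commonly used to help keep the learned representations from collapsing to a constant.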

As he put it at a recent MIT symposium:

“Within three to five years, this, world models, not LLMs, will be the dominant model for AI architectures, and nobody in their right mind would use LLMs of the type we have today.”

Instead of guessing the next word, JEPA tries to predict the meaning (the embedding) of missing parts of an image. The idea is that this pressure pushes the model to learn spatial structure, physical relationships, object permanence, and causal patterns.

These are the building blocks of real-world understanding.

A newborn human does not know language. It knows images, sensations, emotional patterns, movement, texture, light. Every living thing must build a sense of space, weight, and cause and effect to survive. Language arrives later.

In our earliest years, we are busy building an internal, predictive understanding of the world. Training an artificial mind on language alone is akin to raising a human mind inside a sealed linguistic chamber, fed nothing but words for its entire development. It may never develop a true, intuitive grasp of the world.

When I walk through the Metropolitan Museum of Art, I marvel at the proof of humanity: beautiful drawings pulled from tombs and caves, stories carved into stone through interpretations of mass and shape. I do not speak their makers’ languages, yet I understand the ideas and lives they depict. This understanding happens without words.

Perception is the root of meaning.

In physics, this idea isn’t even radical. Observation is foundational. Quantum theory, especially the observer effect, is often taken to suggest that observation itself shapes the structure of reality. Does anything exist if it is not observed? In that sense, LeCun’s argument feels almost inevitable: cognition begins with perception, and existence with being observed. This is necessary for worlds to collide.

As one paper on Authorea puts it: “Instead of using traditional methods, a JEPA can use quantum computing to represent information directly in the amplitudes of a quantum state.”
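The quoted paper isn’t identified here, so the sketch below is not its method. It only illustrates the generic idea of amplitude encoding: the classical step of turning a feature vector (an embedding, for example) into a normalized list of amplitudes, where n qubits can hold 2ⁿ of them. `amplitude_encode` is a hypothetical helper written for this illustration, not a function from any quantum library.

```python
import numpy as np


def amplitude_encode(features):
    """Turn a classical feature vector into the amplitudes of an n-qubit state.

    Pads the vector with zeros to the next power of two, then scales it to unit
    L2 norm so the squared amplitudes form a valid probability distribution.
    """
    x = np.asarray(features, dtype=float)
    if x.ndim != 1 or x.size == 0:
        raise ValueError("expected a non-empty 1-D feature vector")
    # Smallest n with 2**n >= len(x); use at least one qubit.
    n_qubits = max(1, int(np.ceil(np.log2(x.size))))
    padded = np.zeros(2 ** n_qubits)
    padded[: x.size] = x
    norm = np.linalg.norm(padded)
    if norm == 0.0:
        raise ValueError("an all-zero vector cannot be normalized into a state")
    return padded / norm, n_qubits


state, n_qubits = amplitude_encode([3.0, 4.0])
print(n_qubits, state)     # 1 qubit, amplitudes [0.6 0.8]
print(np.sum(state ** 2))  # squared amplitudes sum to 1 (up to rounding)
```

The appeal is compactness: 1,024 features fit in the amplitudes of just 10 qubits. This sketch covers only the classical preprocessing; actually preparing such a state on quantum hardware requires a state-preparation circuit, which can be expensive in general and is not shown here.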

Given recent advances in quantum research and its applications to AI, LeCun’s prediction makes even more sense. Physics is abstract, but it is the underlying map of the universe. If we are, in essence, to align a new universe with our own, that universe will need to be built on more than language alone.

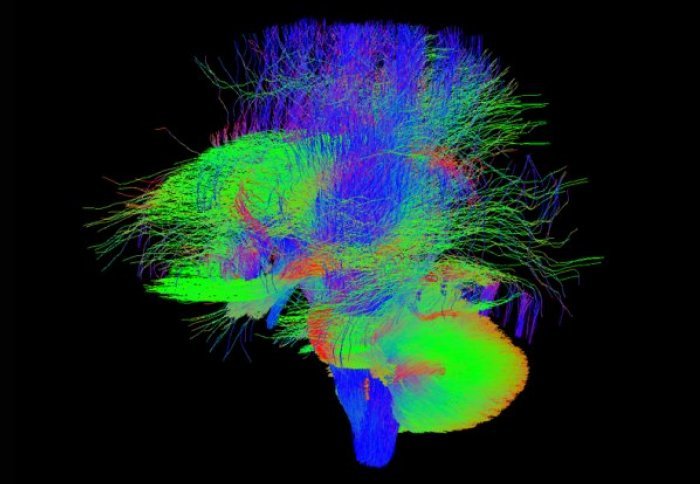

Scan of an infant’s brain